Student's t-test

A t-test is any statistical hypothesis test in which the test statistic follows a Student's t-distribution under the null hypothesis. Its most common application is to test whether the means of two populations differ significantly. It is typically applied when the test statistic would follow a normal distribution if the value of a scaling term in the test statistic were known (typically, the scaling term is unknown and is therefore a nuisance parameter). When the scaling term is estimated from the data, the test statistic, under certain conditions, follows a Student's t-distribution. In many cases, a Z-test yields very similar results to a t-test, since the latter converges to the former as the size of the dataset increases.

History

The term "t-statistic" is abbreviated from "hypothesis test statistic".[1] In statistics, the t-distribution was first derived as a posterior distribution in 1876 by Helmert[2][3][4] and Lüroth.[5][6][7] The t-distribution also appeared in a more general form as the Pearson type IV distribution in Karl Pearson's 1895 paper.[8] However, the t-distribution, also known as Student's t-distribution, gets its name from William Sealy Gosset, who first published it in English in 1908 in the scientific journal Biometrika using the pseudonym "Student"[9][10] because his employer preferred staff to use pen names when publishing scientific papers.[11] Gosset worked at the Guinness Brewery in Dublin, Ireland, and was interested in the problems of small samples, for example the chemical properties of barley measured on small sample sizes. A second account of the origin of the pseudonym is that Guinness did not want its competitors to know that it was using the t-test to determine the quality of raw material. Although the name "Student" comes from Gosset's pseudonym, it was through the work of Ronald Fisher that the distribution became well known as "Student's distribution"[12] and the test as "Student's t-test".

Gosset devised the t-test as an economical way to monitor the quality of stout. The t-test work was submitted to and accepted in the journal Biometrika and published in 1908.[9]

Guinness had a policy of allowing technical staff leave for study (so-called "study leave"), which Gosset used during the first two terms of the 1906–1907 academic year in Professor Karl Pearson's Biometric Laboratory at University College London.[13] Gosset's identity was then known to fellow statisticians and to editor-in-chief Karl Pearson.[14]

Uses

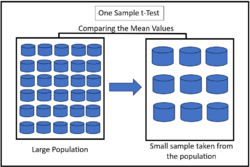

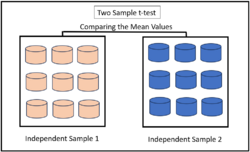

The most frequently used t-tests are one-sample and two-sample tests:

- A one-sample location test of whether the mean of a population has a value specified in a null hypothesis.

- A two-sample location test of the null hypothesis that the means of two populations are equal. All such tests are usually called Student's t-tests, though strictly speaking that name should only be used if the variances of the two populations are also assumed to be equal; the form of the test used when this assumption is dropped is sometimes called Welch's t-test. These tests are often referred to as unpaired or independent samples t-tests, as they are typically applied when the statistical units underlying the two samples being compared are non-overlapping.[15]

Assumptions

Most test statistics have the form t = Z/s, where Z and s are functions of the data.

Z may be sensitive to the alternative hypothesis (i.e., its magnitude tends to be larger when the alternative hypothesis is true), whereas s is a scaling parameter that allows the distribution of t to be determined.

As an example, in the one-sample t-test

- [math]\displaystyle{ t = \frac{Z}{s} = \frac{\bar{X} - \mu}{\hat\sigma / \sqrt{n}}, }[/math]

where [math]\displaystyle{ \bar{X} }[/math] is the sample mean from a sample X1, X2, …, Xn, of size n, s is the standard error of the mean, [math]\displaystyle{ \hat\sigma }[/math] is the estimate of the standard deviation of the population, and μ is the population mean.

The assumptions underlying a t-test in the simplest form above are that:

- [math]\displaystyle{ \bar{X} }[/math] follows a normal distribution with mean μ and variance [math]\displaystyle{ \sigma^2/n }[/math].

- [math]\displaystyle{ \hat\sigma^2 (n - 1)/\sigma^2 }[/math] follows a [math]\displaystyle{ \chi^2 }[/math] distribution with n − 1 degrees of freedom. This assumption is met when the observations used for estimating [math]\displaystyle{ \hat\sigma^2 }[/math] come from a normal distribution (and are i.i.d. for each group).

- Z and s are independent.

In the t-test comparing the means of two independent samples, the following assumptions should be met:

- The means of the two populations being compared should follow normal distributions. Under weak assumptions, this follows in large samples from the central limit theorem, even when the distribution of observations in each group is non-normal.[16]

- If using Student's original definition of the t-test, the two populations being compared should have the same variance (testable using F-test, Levene's test, Bartlett's test, or the Brown–Forsythe test; or assessable graphically using a Q–Q plot). If the sample sizes in the two groups being compared are equal, Student's original t-test is highly robust to the presence of unequal variances.[17] Welch's t-test is insensitive to equality of the variances regardless of whether the sample sizes are similar.

- The data used to carry out the test should either be sampled independently from the two populations being compared or be fully paired. This is in general not testable from the data, but if the data are known to be dependent (e.g. paired by test design), a dependent test has to be applied. For partially paired data, the classical independent t-tests may give invalid results as the test statistic might not follow a t distribution, while the dependent t-test is sub-optimal as it discards the unpaired data.[18]

Most two-sample t-tests are robust to all but large deviations from the assumptions.[19]

For exactness, the t-test and Z-test require normality of the sample means, and the t-test additionally requires that the sample variance follows a scaled χ2 distribution, and that the sample mean and sample variance be statistically independent. Normality of the individual data values is not required if these conditions are met. By the central limit theorem, sample means of moderately large samples are often well-approximated by a normal distribution even if the data are not normally distributed. For non-normal data, the distribution of the sample variance may deviate substantially from a χ2 distribution.

However, if the sample size is large, Slutsky's theorem implies that the distribution of the sample variance has little effect on the distribution of the test statistic. That is, as sample size [math]\displaystyle{ n }[/math] increases:

- [math]\displaystyle{ \sqrt{n}(\bar{X} - \mu) \xrightarrow{d} N(0, \sigma^2) }[/math] as per the Central limit theorem,

- [math]\displaystyle{ s^2 \xrightarrow{p} \sigma^2 }[/math] as per the law of large numbers,

- [math]\displaystyle{ \therefore \frac{\sqrt{n}(\bar{X} - \mu)}{s} \xrightarrow{d} N(0, 1) }[/math].

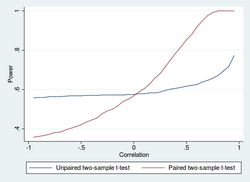

Unpaired and paired two-sample t-tests

Two-sample t-tests for a difference in means involve independent samples (unpaired samples) or paired samples. Paired t-tests are a form of blocking, and have greater power (probability of avoiding a type II error, also known as a false negative) than unpaired tests when the paired units are similar with respect to "noise factors" (see confounder) that are independent of membership in the two groups being compared.[20] In a different context, paired t-tests can be used to reduce the effects of confounding factors in an observational study.

Independent (unpaired) samples

The independent samples t-test is used when two separate sets of independent and identically distributed samples are obtained, and one variable from each of the two populations is compared. For example, suppose we are evaluating the effect of a medical treatment, and we enroll 100 subjects into our study, then randomly assign 50 subjects to the treatment group and 50 subjects to the control group. In this case, we have two independent samples and would use the unpaired form of the t-test.

Paired samples

Paired samples t-tests typically consist of a sample of matched pairs of similar units, or one group of units that has been tested twice (a "repeated measures" t-test).

A typical example of the repeated measures t-test would be where subjects are tested prior to a treatment, say for high blood pressure, and the same subjects are tested again after treatment with a blood-pressure-lowering medication. By comparing the same patient's numbers before and after treatment, we are effectively using each patient as their own control. That way the correct rejection of the null hypothesis (here: of no difference made by the treatment) can become much more likely, with statistical power increasing simply because the random interpatient variation has now been eliminated. However, an increase of statistical power comes at a price: more tests are required, each subject having to be tested twice. Because half of the sample now depends on the other half, the paired version of Student's t-test has only n/2 − 1 degrees of freedom (with n being the total number of observations). Pairs become individual test units, and the sample has to be doubled to achieve the same number of degrees of freedom. Normally, there are n − 1 degrees of freedom (with n being the total number of observations).[21]

A paired samples t-test based on a "matched-pairs sample" results from an unpaired sample that is subsequently used to form a paired sample, by using additional variables that were measured along with the variable of interest.[22] The matching is carried out by identifying pairs of values consisting of one observation from each of the two samples, where the pair is similar in terms of other measured variables. This approach is sometimes used in observational studies to reduce or eliminate the effects of confounding factors.

Paired samples t-tests are often referred to as "dependent samples t-tests".

Calculations

Explicit expressions that can be used to carry out various t-tests are given below. In each case, the formula for a test statistic that either exactly follows or closely approximates a t-distribution under the null hypothesis is given. Also, the appropriate degrees of freedom are given in each case. Each of these statistics can be used to carry out either a one-tailed or two-tailed test.

Once the t value and degrees of freedom are determined, a p-value can be found using a table of values from Student's t-distribution. If the calculated p-value is below the threshold chosen for statistical significance (usually the 0.10, the 0.05, or 0.01 level), then the null hypothesis is rejected in favor of the alternative hypothesis.
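As an alternative to a printed table, the p-value can be approximated numerically. The following is a minimal sketch using only the Python standard library; the function names `t_pdf` and `two_tailed_p` are illustrative, and Simpson's rule is chosen here only for simplicity, not because the article prescribes it.

```python
import math

def t_pdf(x, df):
    """Density of Student's t-distribution with df degrees of freedom."""
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))
    return math.exp(log_c - (df + 1) / 2 * math.log1p(x * x / df))

def two_tailed_p(t, df, steps=2000):
    """Two-tailed p-value: 1 - 2 * (integral of the density from 0 to |t|).

    The integral is approximated with Simpson's rule; steps must be even.
    """
    upper = abs(t)
    if upper == 0:
        return 1.0
    h = upper / steps
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    return 1.0 - 2.0 * total * h / 3.0

# Example: t = 4.899 with 4 degrees of freedom, compared with a 0.05 threshold.
p = two_tailed_p(4.899, 4)
print(f"p = {p:.5f}, reject at 0.05: {p < 0.05}")
```

For a one-tailed test in the direction of the observed effect, half of this value would be used.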

One-sample t-test

In testing the null hypothesis that the population mean is equal to a specified value μ0, one uses the statistic

- [math]\displaystyle{ t = \frac{\bar{x} - \mu_0}{s/\sqrt{n}}, }[/math]

where [math]\displaystyle{ \bar x }[/math] is the sample mean, s is the sample standard deviation and n is the sample size. The degrees of freedom used in this test are n − 1. Although the parent population does not need to be normally distributed, the distribution of the population of sample means [math]\displaystyle{ \bar x }[/math] is assumed to be normal.

By the central limit theorem, if the observations are independent and the second moment exists, then for large n the statistic [math]\displaystyle{ t }[/math] will be approximately standard normal, [math]\displaystyle{ N(0, 1) }[/math].
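The one-sample statistic can be computed directly from its definition. This is a minimal sketch: the function name `one_sample_t` and the null value μ0 = 30 are assumptions made for illustration, and the data are the first set of six measurements from the worked example in this article.

```python
import math
import statistics

def one_sample_t(sample, mu0):
    """Return (t, degrees of freedom) for H0: population mean == mu0."""
    n = len(sample)
    mean = statistics.mean(sample)
    s = statistics.stdev(sample)          # sample standard deviation (divisor n - 1)
    t = (mean - mu0) / (s / math.sqrt(n))
    return t, n - 1

weights = [30.02, 29.99, 30.11, 29.97, 30.01, 29.99]
t, df = one_sample_t(weights, mu0=30.0)   # mu0 = 30 is a hypothetical null value
print(f"t = {t:.3f}, df = {df}")
```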

Slope of a regression line

Suppose one is fitting the model

- [math]\displaystyle{ Y = \alpha + \beta x + \varepsilon, }[/math]

where x is known, α and β are unknown, ε is a normally distributed random variable with mean 0 and unknown variance σ2, and Y is the outcome of interest. We want to test the null hypothesis that the slope β is equal to some specified value β0 (often taken to be 0, in which case the null hypothesis is that x and y are uncorrelated).

Let

- [math]\displaystyle{ \begin{align} \hat\alpha, \hat\beta &= \text{least-squares estimators}, \\ SE_{\hat\alpha}, SE_{\hat\beta} &= \text{the standard errors of least-squares estimators}. \end{align} }[/math]

Then

- [math]\displaystyle{ t_\text{score} = \frac{\hat\beta - \beta_0}{ SE_{\hat\beta} } \sim \mathcal{T}_{n-2} }[/math]

has a t-distribution with n − 2 degrees of freedom if the null hypothesis is true. The standard error of the slope coefficient:

- [math]\displaystyle{ SE_{\hat\beta} = \frac{\sqrt{\displaystyle \frac{1}{n - 2}\sum_{i=1}^n (y_i - \hat y_i)^2}}{\sqrt{\displaystyle \sum_{i=1}^n (x_i - \bar{x})^2}} }[/math]

can be written in terms of the residuals. Let

- [math]\displaystyle{ \begin{align} \hat\varepsilon_i &= y_i - \hat y_i = y_i - (\hat\alpha + \hat\beta x_i) = \text{residuals} = \text{estimated errors}, \\ \text{SSR} &= \sum_{i=1}^n {\hat\varepsilon_i}^2 = \text{sum of squares of residuals}. \end{align} }[/math]

Then [math]\displaystyle{ t_\text{score} }[/math] is given by

- [math]\displaystyle{ t_\text{score} = \frac{(\hat\beta - \beta_0) \sqrt{n-2}}{\sqrt{\frac{SSR}{\sum_{i=1}^n (x_i - \bar{x})^2}}}. }[/math]

When [math]\displaystyle{ \beta_0 = 0 }[/math], another way to determine the [math]\displaystyle{ t_\text{score} }[/math] is

- [math]\displaystyle{ t_\text{score} = \frac{r\sqrt{n - 2}}{\sqrt{1 - r^2}}, }[/math]

where r is the Pearson correlation coefficient.

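The [math]\displaystyle{ t_\text{score,intercept} }[/math] can be determined from the [math]\displaystyle{ t_\text{score,slope} }[/math] (both computed for a null value of 0):

- [math]\displaystyle{ t_\text{score,intercept} = \frac{\hat\alpha}{\hat\beta} \frac{t_\text{score,slope}}{\sqrt{s_\text{x}^2 + \bar{x}^2}}, }[/math]

where [math]\displaystyle{ s_\text{x}^2 = \frac{1}{n}\sum_{i=1}^n (x_i - \bar{x})^2 }[/math] is the sample variance of x (computed with divisor n).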
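The slope and intercept formulas above can be checked against each other numerically. The sketch below assumes β0 = 0 and uses the six drug-dose and word-recall values from the regression example later in this article; the function name `slope_t_tests` is illustrative, and the variance of x is taken with divisor n.

```python
import math

def slope_t_tests(x, y):
    """t scores for the slope (H0: beta = 0) and intercept of a least-squares line."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    beta = sxy / sxx                       # least-squares slope
    alpha = y_bar - beta * x_bar           # least-squares intercept
    ssr = sum((yi - (alpha + beta * xi)) ** 2 for xi, yi in zip(x, y))
    se_beta = math.sqrt(ssr / (n - 2)) / math.sqrt(sxx)
    t_slope = beta / se_beta
    # Same t score from the Pearson correlation coefficient r.
    r = sxy / math.sqrt(sxx * syy)
    t_from_r = r * math.sqrt(n - 2) / math.sqrt(1 - r * r)
    # Intercept t score from the slope t score (variance of x with divisor n).
    t_intercept = (alpha / beta) * t_slope / math.sqrt(sxx / n + x_bar ** 2)
    return {"t_slope": t_slope, "t_from_r": t_from_r,
            "t_intercept": t_intercept, "df": n - 2}

result = slope_t_tests([0, 0, 0, 1, 1, 1], [1, 2, 3, 5, 6, 7])
print({k: round(v, 3) for k, v in result.items()})
```

The two routes to the slope t score agree, and the intercept value matches the t value reported for the intercept in the regression example.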

Independent two-sample t-test

Equal sample sizes and variance

Given two groups (1, 2), this test is only applicable when:

- the two sample sizes are equal,

- it can be assumed that the two distributions have the same variance.

Violations of these assumptions are discussed below.

The t statistic to test whether the means are different can be calculated as follows:

- [math]\displaystyle{ t = \frac{\bar{X}_1 - \bar{X}_2}{s_p \sqrt\frac{2}{n}}, }[/math]

where

- [math]\displaystyle{ s_p = \sqrt{\frac{s_{X_1}^2 + s_{X_2}^2}{2}}. }[/math]

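Here [math]\displaystyle{ s_p }[/math] is the pooled standard deviation for [math]\displaystyle{ n = n_1 = n_2 }[/math], and [math]\displaystyle{ s_{X_1}^2 }[/math] and [math]\displaystyle{ s_{X_2}^2 }[/math] are the unbiased estimators of the population variance. The denominator of t is the standard error of the difference between two means.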

For significance testing, the degrees of freedom for this test is 2n − 2, where n is the number of observations in each group.

Equal or unequal sample sizes, similar variances (1/2 < sX1/sX2 < 2)

This test is used only when it can be assumed that the two distributions have the same variance (when this assumption is violated, see below). The previous formulae are a special case of the formulae below; one recovers them when both samples are equal in size: [math]\displaystyle{ n = n_1 = n_2 }[/math].

The t statistic to test whether the means are different can be calculated as follows:

- [math]\displaystyle{ t = \frac{\bar{X}_1 - \bar{X}_2}{s_p \cdot \sqrt{\frac{1}{n_1} + \frac{1}{n_2}}}, }[/math]

where

- [math]\displaystyle{ s_p = \sqrt{\frac{(n_1 - 1)s_{X_1}^2 + (n_2 - 1)s_{X_2}^2}{n_1 + n_2-2}} }[/math]

is the pooled standard deviation of the two samples: it is defined in this way so that its square is an unbiased estimator of the common variance, whether or not the population means are the same. In these formulae, [math]\displaystyle{ n_i - 1 }[/math] is the number of degrees of freedom for each group, and the total sample size minus two (that is, [math]\displaystyle{ n_1 + n_2 - 2 }[/math]) is the total number of degrees of freedom, which is used in significance testing.
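The pooled-variance statistic can be sketched in a few lines of Python. The function name `pooled_t` is an assumption for illustration; the data are the two six-element samples from the worked example in this article, so the result can be compared with the equal-variances figures given there.

```python
import math
import statistics

def pooled_t(x1, x2):
    """Student's two-sample t statistic with pooled variance.

    Returns (t, degrees of freedom, pooled standard deviation).
    Works for equal or unequal sample sizes.
    """
    n1, n2 = len(x1), len(x2)
    v1 = statistics.variance(x1)          # unbiased variance of group 1
    v2 = statistics.variance(x2)          # unbiased variance of group 2
    df = n1 + n2 - 2
    sp = math.sqrt(((n1 - 1) * v1 + (n2 - 1) * v2) / df)
    t = (statistics.mean(x1) - statistics.mean(x2)) / (sp * math.sqrt(1 / n1 + 1 / n2))
    return t, df, sp

a1 = [30.02, 29.99, 30.11, 29.97, 30.01, 29.99]
a2 = [29.89, 29.93, 29.72, 29.98, 30.02, 29.98]
t, df, sp = pooled_t(a1, a2)
print(f"t = {t:.3f}, df = {df}")
```

With equal group sizes the pooled standard deviation reduces to the square root of the average of the two variances, which is the special case given in the previous subsection.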

Equal or unequal sample sizes, unequal variances (sX1 > 2sX2 or sX2 > 2sX1)

This test, also known as Welch's t-test, is used only when the two population variances are not assumed to be equal (the two sample sizes may or may not be equal) and hence must be estimated separately. The t statistic to test whether the population means are different is calculated as

- [math]\displaystyle{ t = \frac{\bar{X}_1 - \bar{X}_2}{s_{\bar\Delta}}, }[/math]

where

- [math]\displaystyle{ s_{\bar\Delta} = \sqrt{\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}}. }[/math]

Here [math]\displaystyle{ s_i^2 }[/math] is the unbiased estimator of the variance of each of the two samples, with [math]\displaystyle{ n_i }[/math] the number of participants in group i (i = 1 or 2). In this case [math]\displaystyle{ (s_{\bar\Delta})^2 }[/math] is not a pooled variance. For use in significance testing, the distribution of the test statistic is approximated as an ordinary Student's t-distribution with the degrees of freedom calculated using

- [math]\displaystyle{ \text{d.f.} = \frac{\left(\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}\right)^2}{\frac{(s_1^2/n_1)^2}{n_1 - 1} + \frac{(s_2^2/n_2)^2}{n_2 - 1}}. }[/math]

This is known as the Welch–Satterthwaite equation. The true distribution of the test statistic actually depends (slightly) on the two unknown population variances (see Behrens–Fisher problem).
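Welch's statistic and the Welch–Satterthwaite degrees of freedom can be sketched as follows. The function name `welch_t` is an assumption for illustration; the data are the two samples from the worked example in this article.

```python
import math
import statistics

def welch_t(x1, x2):
    """Welch's t statistic with Welch-Satterthwaite degrees of freedom.

    Returns (t, degrees of freedom, standard error of the mean difference).
    """
    n1, n2 = len(x1), len(x2)
    q1 = statistics.variance(x1) / n1     # s_1^2 / n_1
    q2 = statistics.variance(x2) / n2     # s_2^2 / n_2
    se = math.sqrt(q1 + q2)               # not a pooled quantity
    t = (statistics.mean(x1) - statistics.mean(x2)) / se
    df = (q1 + q2) ** 2 / (q1 ** 2 / (n1 - 1) + q2 ** 2 / (n2 - 1))
    return t, df, se

a1 = [30.02, 29.99, 30.11, 29.97, 30.01, 29.99]
a2 = [29.89, 29.93, 29.72, 29.98, 30.02, 29.98]
t, df, se = welch_t(a1, a2)
print(f"t = {t:.3f}, df = {df:.3f}, se = {se:.5f}")
```

The degrees of freedom are generally not an integer, and they lie between the smaller of the two per-group values and the pooled value n1 + n2 − 2.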

Exact method for unequal variances and sample sizes

The test[23] deals with the famous Behrens–Fisher problem, i.e., comparing the difference between the means of two normally distributed populations when the variances of the two populations are not assumed to be equal, based on two independent samples.

The test is developed as an exact test that allows for unequal sample sizes and unequal variances of two populations. The exact property still holds even with extremely small and unbalanced sample sizes (e.g. [math]\displaystyle{ n_1=5, n_2=50 }[/math]).

The statistic to test whether the means are different can be calculated as follows:

Let [math]\displaystyle{ X = [X_1,X_2,\ldots,X_m]^T }[/math] and [math]\displaystyle{ Y = [Y_1,Y_2,\ldots,Y_n]^T }[/math] be the i.i.d. sample vectors ([math]\displaystyle{ m\ge n }[/math]) from [math]\displaystyle{ N(\mu_1,\sigma_1^2) }[/math] and [math]\displaystyle{ N(\mu_2,\sigma_2^2) }[/math], respectively.

Let [math]\displaystyle{ (P^T)_{n\times n} }[/math] be an [math]\displaystyle{ n\times n }[/math] orthogonal matrix whose first-row elements are all [math]\displaystyle{ 1/\sqrt{n} }[/math]. Similarly, let [math]\displaystyle{ (Q^T)_{n\times m} }[/math] be the first n rows of an [math]\displaystyle{ m\times m }[/math] orthogonal matrix (whose first-row elements are all [math]\displaystyle{ 1/\sqrt{m} }[/math]).

Then [math]\displaystyle{ Z:=(Q^T)_{n\times m}X/\sqrt{m}-(P^T)_{n\times n}Y/\sqrt{n} }[/math] is an n-dimensional normal random vector.

- [math]\displaystyle{ Z \sim N( (\mu_1-\mu_2,0,...,0)^T , (\sigma_1^2/m+\sigma_2^2/n)I_n). }[/math]

From the above distribution we see that

- [math]\displaystyle{ Z_1=\bar X-\bar Y=\frac1m\sum_{i=1}^m X_i-\frac1n\sum_{j=1}^n Y_j, }[/math]

- [math]\displaystyle{ Z_1-(\mu_1-\mu_2)\sim N(0,\sigma_1^2/m+\sigma_2^2/n), }[/math]

- [math]\displaystyle{ \frac{\sum_{i=2}^n Z^2_i}{n-1}\sim \frac{\chi^2_{n-1}}{n-1}\times\left(\frac{\sigma_1^2}{m}+\frac{\sigma_2^2}{n}\right) }[/math]

- [math]\displaystyle{ Z_1-(\mu_1-\mu_2) \perp \sum_{i=2}^n Z^2_i. }[/math]

- [math]\displaystyle{ T_e := \frac{ Z_1-(\mu_1-\mu_2) }{ \sqrt{ (\sum_{i=2}^{n} Z^2_i) /(n-1) } } \sim t_{n-1}. }[/math]
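The construction above can be sketched in Python under the null hypothesis μ1 = μ2. The article does not specify which orthogonal matrices to use; the Helmert matrix below is one convenient choice and is an assumption of this sketch, as are the function names and the sample values.

```python
import math

def helmert(k):
    """Return a k x k orthogonal matrix whose first row is all 1/sqrt(k)."""
    rows = [[1.0 / math.sqrt(k)] * k]
    for i in range(1, k):
        scale = math.sqrt(i * (i + 1))
        rows.append([1.0 / scale] * i + [-i / scale] + [0.0] * (k - i - 1))
    return rows

def exact_unequal_variance_t(x, y):
    """Statistic T_e under H0: mu1 == mu2; returns (T_e, n - 1, Z)."""
    m, n = len(x), len(y)
    if m < n or n < 2:
        raise ValueError("need len(x) >= len(y) >= 2")
    q_t = helmert(m)[:n]                  # first n rows of an m x m orthogonal matrix
    p_t = helmert(n)                      # n x n orthogonal matrix
    z = [
        sum(q * xi for q, xi in zip(q_row, x)) / math.sqrt(m)
        - sum(p * yi for p, yi in zip(p_row, y)) / math.sqrt(n)
        for q_row, p_row in zip(q_t, p_t)
    ]
    scale = math.sqrt(sum(v * v for v in z[1:]) / (n - 1))
    return z[0] / scale, n - 1, z

x = [5.1, 4.8, 5.6, 5.0, 5.3]             # hypothetical larger sample (m = 5)
y = [4.2, 4.9, 4.4]                       # hypothetical smaller sample (n = 3)
t_e, df, z = exact_unequal_variance_t(x, y)
print(f"T_e = {t_e:.3f}, df = {df}")
```

The first component of Z equals the difference of the sample means, as stated above. With this construction the value of the statistic depends on the chosen orthogonal matrices and on the ordering of the observations.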

Dependent t-test for paired samples

This test is used when the samples are dependent; that is, when there is only one sample that has been tested twice (repeated measures) or when there are two samples that have been matched or "paired". This is an example of a paired difference test. The t statistic is calculated as

- [math]\displaystyle{ t = \frac{\bar{X}_D - \mu_0}{s_D/\sqrt n}, }[/math]

where [math]\displaystyle{ \bar{X}_D }[/math] and [math]\displaystyle{ s_D }[/math] are the average and standard deviation of the differences between all pairs. A pair consists, for example, of one person's pre-test and post-test scores, or of two persons matched into a meaningful group (for instance, drawn from the same family or age group: see table). The constant μ0 is zero if we want to test whether the average of the differences is significantly different from zero. The degree of freedom used is n − 1, where n represents the number of pairs.
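The paired statistic is a one-sample t statistic applied to the within-pair differences. The sketch below uses the four repeated-measures percentages from this section's example table; the function name `paired_t` is an assumption for illustration.

```python
import math
import statistics

def paired_t(first, second, mu0=0.0):
    """Paired t statistic on the differences second - first.

    Returns (t, degrees of freedom), where the degrees of freedom are
    the number of pairs minus one.
    """
    if len(first) != len(second):
        raise ValueError("paired samples must have the same length")
    diffs = [b - a for a, b in zip(first, second)]
    n = len(diffs)
    t = (statistics.mean(diffs) - mu0) / (statistics.stdev(diffs) / math.sqrt(n))
    return t, n - 1

test_1 = [35, 50, 90, 78]   # Test 1 scores (percent)
test_2 = [67, 46, 86, 91]   # Test 2 scores (percent), same subjects in the same order
t, df = paired_t(test_1, test_2)
print(f"t = {t:.3f}, df = {df}")
```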

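Example of matched pairs

| Pair | Name | Age | Test |
|---|---|---|---|
| 1 | John | 35 | 250 |
| 1 | Jane | 36 | 340 |
| 2 | Jimmy | 22 | 460 |
| 2 | Jessy | 21 | 200 |

Example of repeated measures

| Number | Name | Test 1 | Test 2 |
|---|---|---|---|
| 1 | Mike | 35% | 67% |
| 2 | Melanie | 50% | 46% |
| 3 | Melissa | 90% | 86% |
| 4 | Mitchell | 78% | 91% |

Worked examples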

Let A1 denote a set obtained by drawing a random sample of six measurements:

- [math]\displaystyle{ A_1=\{30.02,\ 29.99,\ 30.11,\ 29.97,\ 30.01,\ 29.99\} }[/math]

and let A2 denote a second set obtained similarly:

- [math]\displaystyle{ A_2=\{29.89,\ 29.93,\ 29.72,\ 29.98,\ 30.02,\ 29.98\} }[/math]

These could be, for example, the weights of screws that were manufactured by two different machines.

We will carry out tests of the null hypothesis that the means of the populations from which the two samples were taken are equal.

The difference between the two sample means, each denoted by [math]\displaystyle{ \bar{X}_i }[/math], which appears in the numerator for all the two-sample testing approaches discussed above, is

- [math]\displaystyle{ \bar{X}_1 - \bar{X}_2 = 0.095. }[/math]

The sample standard deviations for the two samples are approximately 0.05 and 0.11, respectively. For such small samples, a test of equality between the two population variances would not be very powerful. Since the sample sizes are equal, the two forms of the two-sample t-test will perform similarly in this example.

Unequal variances

If the approach for unequal variances (discussed above) is followed, the results are

- [math]\displaystyle{ \sqrt{\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}} \approx 0.04849 }[/math]

and the degrees of freedom

- [math]\displaystyle{ \text{d.f.} \approx 7.031. }[/math]

The test statistic is approximately 1.959, which gives a two-tailed test p-value of 0.09077.

Equal variances

If the approach for equal variances (discussed above) is followed, the results are

- [math]\displaystyle{ s_p \approx 0.08399 }[/math]

and the degrees of freedom

- [math]\displaystyle{ \text{d.f.} = 10. }[/math]

The test statistic is approximately equal to 1.959, which gives a two-tailed p-value of 0.07857.

Related statistical tests

Alternatives to the t-test for location problems

The t-test provides an exact test for the equality of the means of two i.i.d. normal populations with unknown, but equal, variances. (Welch's t-test is a nearly exact test for the case where the data are normal but the variances may differ.) For moderately large samples and a one tailed test, the t-test is relatively robust to moderate violations of the normality assumption.[24] In large enough samples, the t-test asymptotically approaches the z-test, and becomes robust even to large deviations from normality.[16]

If the data are substantially non-normal and the sample size is small, the t-test can give misleading results. See Location test for Gaussian scale mixture distributions for some theory related to one particular family of non-normal distributions.

When the normality assumption does not hold, a non-parametric alternative to the t-test may have better statistical power. However, when data are non-normal with differing variances between groups, a t-test may have better type-1 error control than some non-parametric alternatives.[25] Furthermore, non-parametric methods, such as the Mann–Whitney U test discussed below, typically do not test for a difference of means, so they should be used carefully if a difference of means is of primary scientific interest.[16] For example, the Mann–Whitney U test keeps the type 1 error at the desired level alpha if both groups have the same distribution. It also has power to detect an alternative in which group B has the same distribution as group A shifted by a constant (in which case there would indeed be a difference in the means of the two groups). However, groups A and B can have different distributions with the same mean (for example, one distribution with positive skewness and the other with negative skewness, shifted so that their means coincide). In such cases, the Mann–Whitney U test can reject the null hypothesis with probability greater than alpha, but interpreting such a rejection as a difference in means would be incorrect.

In the presence of an outlier, the t-test is not robust. For example, for two independent samples when the data distributions are asymmetric (that is, the distributions are skewed) or the distributions have heavy tails, the Wilcoxon rank-sum test (also known as the Mann–Whitney U test) can have three to four times higher power than the t-test.[24][26][27] The nonparametric counterpart to the paired samples t-test is the Wilcoxon signed-rank test for paired samples. For a discussion on choosing between the t-test and nonparametric alternatives, see Lumley, et al. (2002).[16]

One-way analysis of variance (ANOVA) generalizes the two-sample t-test when the data belong to more than two groups.

A design which includes both paired observations and independent observations

When both paired observations and independent observations are present in the two sample design, assuming data are missing completely at random (MCAR), the paired observations or independent observations may be discarded in order to proceed with the standard tests above. Alternatively making use of all of the available data, assuming normality and MCAR, the generalized partially overlapping samples t-test could be used.[28]

Multivariate testing

A generalization of Student's t statistic, called Hotelling's t-squared statistic, allows for the testing of hypotheses on multiple (often correlated) measures within the same sample. For instance, a researcher might submit a number of subjects to a personality test consisting of multiple personality scales (e.g. the Minnesota Multiphasic Personality Inventory). Because measures of this type are usually positively correlated, it is not advisable to conduct separate univariate t-tests to test hypotheses, as these would neglect the covariance among measures and inflate the chance of falsely rejecting at least one hypothesis (Type I error). In this case a single multivariate test is preferable for hypothesis testing. One option is Fisher's method for combining multiple tests, with alpha reduced for positive correlation among tests. Another is Hotelling's [math]\displaystyle{ T^2 }[/math] statistic, which follows a [math]\displaystyle{ T^2 }[/math] distribution. However, in practice the distribution is rarely used, since tabulated values for [math]\displaystyle{ T^2 }[/math] are hard to find. Usually, [math]\displaystyle{ T^2 }[/math] is converted instead to an F statistic.

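For a one-sample multivariate test, the hypothesis is that the mean vector ([math]\displaystyle{ \boldsymbol\mu }[/math]) is equal to a given vector ([math]\displaystyle{ \boldsymbol\mu_0 }[/math]). The test statistic is Hotelling's [math]\displaystyle{ t^2 }[/math]:

- [math]\displaystyle{ t^2=n(\bar{\mathbf x}-{\boldsymbol\mu_0})'{\mathbf S}^{-1}(\bar{\mathbf x}-{\boldsymbol\mu_0}) }[/math]

where n is the sample size, [math]\displaystyle{ \bar{\mathbf x} }[/math] is the vector of column means and [math]\displaystyle{ \mathbf S }[/math] is an m × m sample covariance matrix.

For a two-sample multivariate test, the hypothesis is that the mean vectors ([math]\displaystyle{ \boldsymbol\mu_1, \boldsymbol\mu_2 }[/math]) of two samples are equal. The test statistic is Hotelling's two-sample [math]\displaystyle{ t^2 }[/math]: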

- [math]\displaystyle{ t^2 = \frac{n_1 n_2}{n_1+n_2}\left(\bar{\mathbf x}_1-\bar{\mathbf x}_2\right)'{\mathbf S_\text{pooled}}^{-1}\left(\bar{\mathbf x}_1-\bar{\mathbf x}_2\right). }[/math]

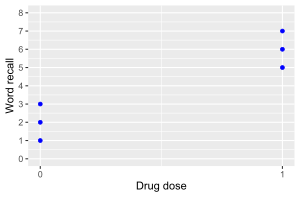

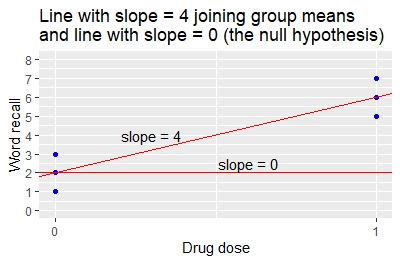

The two-sample t-test is a special case of simple linear regression

The two-sample t-test is a special case of simple linear regression as illustrated by the following example.

A clinical trial examines 6 patients given drug or placebo. Three (3) patients get 0 units of drug (the placebo group). Three (3) patients get 1 unit of drug (the active treatment group). At the end of treatment, the researchers measure the change from baseline in the number of words that each patient can recall in a memory test.

A table of the patients' word recall and drug dose values is shown below.

| Patient | drug.dose | word.recall |
|---|---|---|
| 1 | 0 | 1 |
| 2 | 0 | 2 |
| 3 | 0 | 3 |
| 4 | 1 | 5 |
| 5 | 1 | 6 |
| 6 | 1 | 7 |

Data and code are given for the analysis using the R programming language with the t.test and lm functions for the t-test and linear regression. Here are the same (fictitious) data above generated in R.

    word.recall.data = data.frame(drug.dose=c(0,0,0,1,1,1), word.recall=c(1,2,3,5,6,7))

Perform the t-test. Notice that the assumption of equal variance, var.equal=T, is required to make the analysis exactly equivalent to simple linear regression.

    with(word.recall.data, t.test(word.recall~drug.dose, var.equal=T))

Running the R code gives the following results.

- The mean word.recall in the 0 drug.dose group is 2.

- The mean word.recall in the 1 drug.dose group is 6.

- The difference between treatment groups in the mean word.recall is 6 – 2 = 4.

- The difference in word.recall between drug doses is significant (p=0.00805).

Perform a linear regression of the same data. Calculations may be performed using the R function lm() for a linear model.

    word.recall.data.lm = lm(word.recall~drug.dose, data=word.recall.data)
    summary(word.recall.data.lm)

The linear regression provides a table of coefficients and p-values.

| Coefficient | Estimate | Std. Error | t value | P-value |
|---|---|---|---|---|
| Intercept | 2 | 0.5774 | 3.464 | 0.02572 |
| drug.dose | 4 | 0.8165 | 4.899 | 0.00805 |

The table of coefficients gives the following results.

- The estimate value of 2 for the intercept is the mean value of the word recall when the drug dose is 0.

- The estimate value of 4 for the drug dose indicates that for a 1-unit change in drug dose (from 0 to 1) there is a 4-unit change in mean word recall (from 2 to 6). This is the slope of the line joining the two group means.

- The p-value for the test of whether the slope of 4 differs from 0 is p = 0.00805.

The coefficients for the linear regression specify the slope and intercept of the line that joins the two group means, as illustrated in the graph. The intercept is 2 and the slope is 4.

Compare the result from the linear regression to the result from the t-test.

- From the t-test, the difference between the group means is 6-2=4.

- From the regression, the slope is also 4 indicating that a 1-unit change in drug dose (from 0 to 1) gives a 4-unit change in mean word recall (from 2 to 6).

- The t-test p-value for the difference in means, and the regression p-value for the slope, are both 0.00805. The methods give identical results.

This example shows that, for the special case of a simple linear regression where there is a single x-variable that has values 0 and 1, the t-test gives the same results as the linear regression. The relationship can also be shown algebraically.
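The same equivalence can be checked without R. The following minimal Python sketch recomputes both t statistics for the six fictitious patients directly from the formulas; the variable names are illustrative. Here the two-sample statistic is taken as treated minus placebo, so both values are positive, whereas a statistic computed as the first group minus the second has the opposite sign.

```python
import math
import statistics

dose = [0, 0, 0, 1, 1, 1]
recall = [1, 2, 3, 5, 6, 7]

# Two-sample t statistic with pooled variance (treated minus placebo).
placebo = [y for d, y in zip(dose, recall) if d == 0]
treated = [y for d, y in zip(dose, recall) if d == 1]
n0, n1 = len(placebo), len(treated)
pooled_var = ((n0 - 1) * statistics.variance(placebo)
              + (n1 - 1) * statistics.variance(treated)) / (n0 + n1 - 2)
t_two_sample = ((statistics.mean(treated) - statistics.mean(placebo))
                / math.sqrt(pooled_var * (1 / n0 + 1 / n1)))

# t statistic for the slope of the least-squares line recall ~ dose.
n = len(dose)
x_bar = sum(dose) / n
y_bar = sum(recall) / n
sxx = sum((x - x_bar) ** 2 for x in dose)
slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(dose, recall)) / sxx
intercept = y_bar - slope * x_bar
ssr = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(dose, recall))
t_slope = slope / math.sqrt(ssr / (n - 2) / sxx)

print(f"two-sample t = {t_two_sample:.3f}, slope t = {t_slope:.3f}")
```

Both statistics have 4 degrees of freedom, so equal t values imply equal p-values.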

Recognizing this relationship between the t-test and linear regression facilitates the use of multiple linear regression and multi-way analysis of variance. These alternatives to t-tests allow for the inclusion of additional explanatory variables that are associated with the response. Including such additional explanatory variables using regression or anova reduces the otherwise unexplained variance, and commonly yields greater power to detect differences than do two-sample t-tests.

Software implementations

Many spreadsheet programs and statistics packages, such as QtiPlot, LibreOffice Calc, Microsoft Excel, SAS, SPSS, Stata, DAP, gretl, R, Python, PSPP, Wolfram Mathematica, MATLAB and Minitab, include implementations of Student's t-test.

| Language/Program | Function | Notes |
|---|---|---|
| Microsoft Excel pre 2010 | TTEST(array1, array2, tails, type) | See [1] |
| Microsoft Excel 2010 and later | T.TEST(array1, array2, tails, type) | See [2] |
| Apple Numbers | TTEST(sample-1-values, sample-2-values, tails, test-type) | See [3] |
| LibreOffice Calc | TTEST(Data1; Data2; Mode; Type) | See [4] |
| Google Sheets | TTEST(range1, range2, tails, type) | See [5] |
| Python | scipy.stats.ttest_ind(a, b, equal_var=True) | See [6] |
| MATLAB | ttest(data1, data2) | See [7] |
| Mathematica | TTest[{data1,data2}] | See [8] |
| R | t.test(data1, data2, var.equal=TRUE) | See [9] |
| SAS | PROC TTEST | See [10] |
| Java | tTest(sample1, sample2) | See [11] |
| Julia | EqualVarianceTTest(sample1, sample2) | See [12] |
| Stata | ttest data1 == data2 | See [13] |

See also

- Conditional change model

- F-test – Statistical hypothesis test, mostly using multiple restrictions

- Noncentral t-distribution in power analysis – Probability distribution

- Student's t-statistic

- Z-test – Statistical test

- Mann–Whitney U test – Nonparametric test of the null hypothesis

- Šidák correction for t-test – Statistical method

- Welch's t-test – Statistical test of whether two populations have equal means

- Analysis of variance – Collection of statistical models (ANOVA)

References

- The Microbiome in Health and Disease. Academic Press. 2020-05-29. p. 397. ISBN 978-0-12-820001-8. https://books.google.com/books?id=kiToDwAAQBAJ&pg=PA397.

- Szabó, István (2003). "Systeme aus einer endlichen Anzahl starrer Körper" (in German). Einführung in die Technische Mechanik. Springer Berlin Heidelberg. pp. 196–199. doi:10.1007/978-3-642-61925-0_16. ISBN 978-3-540-13293-6.

- Schlyvitch, B. (October 1937). "Untersuchungen über den anastomotischen Kanal zwischen der Arteria coeliaca und mesenterica superior und damit in Zusammenhang stehende Fragen" (in German). Zeitschrift für Anatomie und Entwicklungsgeschichte 107 (6): 709–737. doi:10.1007/bf02118337. ISSN 0340-2061.

- Helmert (1876). "Die Genauigkeit der Formel von Peters zur Berechnung des wahrscheinlichen Beobachtungsfehlers directer Beobachtungen gleicher Genauigkeit" (in German). Astronomische Nachrichten 88 (8–9): 113–131. doi:10.1002/asna.18760880802. Bibcode: 1876AN.....88..113H. https://zenodo.org/record/1424695.

- Lüroth, J. (1876). "Vergleichung von zwei Werthen des wahrscheinlichen Fehlers" (in German). Astronomische Nachrichten 87 (14): 209–220. doi:10.1002/asna.18760871402. Bibcode: 1876AN.....87..209L. https://zenodo.org/record/1424693.

- Pfanzagl, J. (1996). "Studies in the history of probability and statistics XLIV. A forerunner of the t-distribution". Biometrika 83 (4): 891–898. doi:10.1093/biomet/83.4.891.

- Sheynin, Oscar (1995). "Helmert's work in the theory of errors". Archive for History of Exact Sciences 49 (1): 73–104. doi:10.1007/BF00374700. ISSN 0003-9519.

- Pearson, Karl (1895). "X. Contributions to the mathematical theory of evolution.—II. Skew variation in homogeneous material". Philosophical Transactions of the Royal Society of London A 186: 343–414. doi:10.1098/rsta.1895.0010. Bibcode: 1895RSPTA.186..343P.

- Student (1908). "The Probable Error of a Mean". Biometrika 6 (1): 1–25. doi:10.1093/biomet/6.1.1. http://seismo.berkeley.edu/~kirchner/eps_120/Odds_n_ends/Students_original_paper.pdf. Retrieved 24 July 2016.

- "T Table". https://www.tdistributiontable.com.

- Wendl, Michael C. (2016). "Pseudonymous fame". Science 351 (6280): 1406. doi:10.1126/science.351.6280.1406. PMID 27013722.

- Walpole, Ronald E. (2006). Probability & statistics for engineers & scientists. Myers, H. Raymond (7th ed.). New Delhi: Pearson. ISBN 81-7758-404-9. OCLC 818811849.

- Raju, T. N. (2005). "William Sealy Gosset and William A. Silverman: Two 'Students' of Science". Pediatrics 116 (3): 732–735. doi:10.1542/peds.2005-1134. PMID 16140715.

- Dodge, Yadolah (2008). The Concise Encyclopedia of Statistics. Springer Science & Business Media. pp. 234–235. ISBN 978-0-387-31742-7. https://books.google.com/books?id=k2zklGOBRDwC&pg=PA234.

- Fadem, Barbara (2008). High-Yield Behavioral Science. High-Yield Series. Hagerstown, MD: Lippincott Williams & Wilkins. ISBN 9781451130300.

- Lumley, Thomas; Diehr, Paula; Emerson, Scott; Chen, Lu (May 2002). "The Importance of the Normality Assumption in Large Public Health Data Sets". Annual Review of Public Health 23 (1): 151–169. doi:10.1146/annurev.publhealth.23.100901.140546. ISSN 0163-7525. PMID 11910059.

- Markowski, Carol A.; Markowski, Edward P. (1990). "Conditions for the Effectiveness of a Preliminary Test of Variance". The American Statistician 44 (4): 322–326. doi:10.2307/2684360.

- Guo, Beibei; Yuan, Ying (2017). "A comparative review of methods for comparing means using partially paired data". Statistical Methods in Medical Research 26 (3): 1323–1340. doi:10.1177/0962280215577111. PMID 25834090.

- Bland, Martin (1995). An Introduction to Medical Statistics. Oxford University Press. p. 168. ISBN 978-0-19-262428-4. https://books.google.com/books?id=v6xpAAAAMAAJ.

- Rice, John A. (2006). Mathematical Statistics and Data Analysis (3rd ed.). Duxbury Advanced.

- Weisstein, Eric. "Student's t-Distribution". http://mathworld.wolfram.com/Studentst-Distribution.html.

- David, H. A.; Gunnink, Jason L. (1997). "The Paired t Test Under Artificial Pairing". The American Statistician 51 (1): 9–12. doi:10.2307/2684684.

- Wang, Chang; Jia, Jinzhu (2022). "Te Test: A New Non-asymptotic T-test for Behrens-Fisher Problems". arXiv:2210.16473 [math.ST].

- Sawilowsky, Shlomo S.; Blair, R. Clifford (1992). "A More Realistic Look at the Robustness and Type II Error Properties of the t Test to Departures From Population Normality". Psychological Bulletin 111 (2): 352–360. doi:10.1037/0033-2909.111.2.352.

- Zimmerman, Donald W. (January 1998). "Invalidation of Parametric and Nonparametric Statistical Tests by Concurrent Violation of Two Assumptions". The Journal of Experimental Education 67 (1): 55–68. doi:10.1080/00220979809598344. ISSN 0022-0973.

- Blair, R. Clifford; Higgins, James J. (1980). "A Comparison of the Power of Wilcoxon's Rank-Sum Statistic to That of Student's t Statistic Under Various Nonnormal Distributions". Journal of Educational Statistics 5 (4): 309–335. doi:10.2307/1164905.

- Fay, Michael P.; Proschan, Michael A. (2010). "Wilcoxon–Mann–Whitney or t-test? On assumptions for hypothesis tests and multiple interpretations of decision rules". Statistics Surveys 4: 1–39. doi:10.1214/09-SS051. PMID 20414472. PMC 2857732. http://www.i-journals.org/ss/viewarticle.php?id=51.

- Derrick, B; Toher, D; White, P (2017). "How to compare the means of two samples that include paired observations and independent observations: A companion to Derrick, Russ, Toher and White (2017)". The Quantitative Methods for Psychology 13 (2): 120–126. doi:10.20982/tqmp.13.2.p120. http://eprints.uwe.ac.uk/31765/1/How%20to%20compare%20means......%20BD_DT_PW.pdf.

Sources

- O'Mahony, Michael (1986). Sensory Evaluation of Food: Statistical Methods and Procedures. CRC Press. p. 487. ISBN 0-8247-7337-3.

- Press, William H.; Teukolsky, Saul A.; Vetterling, William T.; Flannery, Brian P. (1992). Numerical Recipes in C: The Art of Scientific Computing. Cambridge University Press. p. 616. ISBN 0-521-43108-5. https://archive.org/details/numericalrecipes0865unse/page/616.

Further reading

- Boneau, C. Alan (1960). "The effects of violations of assumptions underlying the t test". Psychological Bulletin 57 (1): 49–64. doi:10.1037/h0041412. PMID 13802482.

- Edgell, Stephen E.; Noon, Sheila M. (1984). "Effect of violation of normality on the t test of the correlation coefficient". Psychological Bulletin 95 (3): 576–583. doi:10.1037/0033-2909.95.3.576.

External links

- Hazewinkel, Michiel, ed. (2001), "Student test", Encyclopedia of Mathematics, Springer Science+Business Media B.V. / Kluwer Academic Publishers, ISBN 978-1-55608-010-4, https://www.encyclopediaofmath.org/index.php?title=p/s090720

- Trochim, William M.K. "The T-Test", Research Methods Knowledge Base, conjoint.ly

- Econometrics lecture (topic: hypothesis testing) on YouTube by Mark Thoma
