ImageNet

The ImageNet project is a large visual database designed for use in visual object recognition software research. More than 14 million[1][2] images have been hand-annotated by the project to indicate what objects are pictured, and in at least one million of the images, bounding boxes are also provided.[3] ImageNet contains more than 20,000 categories,[2] with a typical category, such as "balloon" or "strawberry", consisting of several hundred images.[4] The database of annotations of third-party image URLs is freely available directly from ImageNet, though the actual images are not owned by ImageNet.[5] Since 2010, the ImageNet project has run an annual software contest, the ImageNet Large Scale Visual Recognition Challenge (ILSVRC), where software programs compete to correctly classify and detect objects and scenes. The challenge uses a "trimmed" list of one thousand non-overlapping classes.[6]

Significance for deep learning

On 30 September 2012, a convolutional neural network (CNN) called AlexNet[7] achieved a top-5 error of 15.3% in the ImageNet 2012 Challenge, more than 10.8 percentage points lower than that of the runner-up. Using convolutional neural networks of this size was feasible because graphics processing units (GPUs) were used during training,[7] an essential ingredient of the deep learning revolution. According to The Economist, "Suddenly people started to pay attention, not just within the AI community but across the technology industry as a whole."[4][8][9]
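The top-5 error metric counts a prediction as wrong only when the correct class is missing from a model's five highest-scoring guesses. The following is a minimal Python sketch of that metric, assuming each image comes with one score per class and a single integer ground-truth label. The function name `top_k_error` and the toy scores are hypothetical; this is not the official ILSVRC evaluation code.

```python
from typing import Sequence


def top_k_error(scores: Sequence[Sequence[float]], labels: Sequence[int], k: int = 5) -> float:
    """Fraction of images whose true label is NOT among the k highest-scoring classes.

    Hypothetical helper for illustration; assumes one score per class per image.
    """
    if len(scores) != len(labels):
        raise ValueError("scores and labels must have the same length")
    if not labels:
        raise ValueError("need at least one image")
    misses = 0
    for row, label in zip(scores, labels):
        # Class indices sorted by descending score; Python's sort is stable,
        # so tied scores keep their original (lower index first) order.
        top_k = sorted(range(len(row)), key=lambda c: row[c], reverse=True)[:k]
        if label not in top_k:
            misses += 1
    return misses / len(labels)


# Toy example: two images, seven classes (made-up scores).
scores = [
    [0.1, 0.5, 0.2, 0.05, 0.05, 0.05, 0.05],   # true class 2 ranks second: a top-5 hit
    [0.9, 0.02, 0.02, 0.02, 0.02, 0.01, 0.01],  # true class 6 ranks last: a top-5 miss
]
labels = [2, 6]
print(top_k_error(scores, labels, k=5))  # 0.5
print(top_k_error(scores, labels, k=1))  # 1.0
```

Read this way, a top-5 error of 15.3% means that for roughly 15.3% of the test images, none of the model's five best guesses was the correct class. Setting `k=1` gives the stricter top-1 error.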

In 2015, AlexNet was outperformed by Microsoft's very deep CNN with over 100 layers, a residual network (ResNet), which won the ImageNet 2015 contest.[10]

History of the database

AI researcher Fei-Fei Li began working on the idea for ImageNet in 2006. At a time when most AI research focused on models and algorithms, Li wanted to expand and improve the data available to train AI algorithms.[11] In 2007, Li met with Princeton professor Christiane Fellbaum, one of the creators of WordNet, to discuss the project. As a result of this meeting, Li went on to build ImageNet starting from the word database of WordNet and using many of its features.[12]

As an assistant professor at Princeton, Li assembled a team of researchers to work on the ImageNet project. They used Amazon Mechanical Turk to help with the classification of images.[12]

They presented their database for the first time as a poster at the 2009 Conference on Computer Vision and Pattern Recognition (CVPR) in Florida.[12][13][14]

Dataset

ImageNet crowdsources its annotation process. Image-level annotations indicate the presence or absence of an object class in an image, such as "there are tigers in this image" or "there are no tigers in this image". Object-level annotations provide a bounding box around the (visible part of the) indicated object. ImageNet uses a variant of the broad WordNet schema to categorize objects, augmented with 120 categories of dog breeds to showcase fine-grained classification.[6] One downside of using WordNet is that its categories may be more "elevated" (that is, more specialized or obscure) than would be optimal for ImageNet: "Most people are more interested in Lady Gaga or the iPod Mini than in this rare kind of diplodocus." In 2012, ImageNet was the world's largest academic user of Mechanical Turk. The average worker identified 50 images per minute.[2]
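The two annotation levels can be pictured as a small record per image and class. The sketch below is a hypothetical Python data model, not ImageNet's actual file format: the class names `BoundingBox` and `ImageAnnotation`, their fields, and the corner-coordinate box convention are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BoundingBox:
    # Assumed convention: pixel coordinates of the top-left and bottom-right corners.
    xmin: int
    ymin: int
    xmax: int
    ymax: int

    def area(self) -> int:
        # Degenerate or inverted boxes are treated as having zero area.
        return max(0, self.xmax - self.xmin) * max(0, self.ymax - self.ymin)


@dataclass
class ImageAnnotation:
    image_id: str   # hypothetical identifier for the image
    category: str   # object class, e.g. a WordNet-derived category such as "tiger"
    present: bool   # image-level label: does the class appear in the image at all?
    boxes: List[BoundingBox] = field(default_factory=list)  # object-level labels

    def __post_init__(self) -> None:
        # An image-level "absent" label cannot be accompanied by object-level boxes.
        if not self.present and self.boxes:
            raise ValueError("bounding boxes given for a class marked absent")


# "There are tigers in this image", with one box around the visible tiger.
tiger = ImageAnnotation("img_0001", "tiger", True, [BoundingBox(10, 20, 110, 220)])
# "There are no tigers in this image": an image-level label only.
no_tiger = ImageAnnotation("img_0002", "tiger", False)
print(tiger.boxes[0].area())  # 20000
```

Keeping the presence flag separate from the box list mirrors the text: every image can carry an image-level label, while only a subset of images also gets object-level boxes.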

Subsets of the dataset

There are various subsets of the ImageNet dataset used in various contexts. One of the most widely used subsets of ImageNet is the "ImageNet Large Scale Visual Recognition Challenge (ILSVRC) 2012-2017 image classification and localization dataset". This is also referred to in the research literature as ImageNet-1K or ILSVRC2017, reflecting the original ILSVRC challenge that involved 1,000 classes. ImageNet-1K contains 1,281,167 training images, 50,000 validation images and 100,000 test images.[15] The full original dataset is referred to as ImageNet-21K. ImageNet-21K contains 14,197,122 images divided into 21,841 classes. Some papers round this up and name it ImageNet-22K.[16]
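The split sizes above lend themselves to a quick sanity check. This Python sketch uses only the figures stated in this section; the constant names and the helper `per_class_average` are illustrative, and a mean per class hides the real variation between classes.

```python
# Figures as stated in the text for ImageNet-1K (ILSVRC 2012-2017 classification).
IMAGENET_1K = {"train": 1_281_167, "validation": 50_000, "test": 100_000}
NUM_CLASSES_1K = 1_000

# Figures as stated in the text for the full dataset (ImageNet-21K).
IMAGENET_21K_IMAGES = 14_197_122
NUM_CLASSES_21K = 21_841


def per_class_average(num_images: int, num_classes: int) -> float:
    """Mean number of images per class (actual per-class counts vary)."""
    return num_images / num_classes


total_1k = sum(IMAGENET_1K.values())
for split, count in IMAGENET_1K.items():
    avg = per_class_average(count, NUM_CLASSES_1K)
    print(f"{split:>10}: {count:>9,} images, ~{avg:.1f} per class")
print(f"{'total':>10}: {total_1k:>9,} images")

avg_21k = per_class_average(IMAGENET_21K_IMAGES, NUM_CLASSES_21K)
print(f"ImageNet-21K: ~{avg_21k:.0f} images per class")
```

ImageNet-1K thus totals 1,431,167 images, with about 1,281 training images per class on average. The ImageNet-21K average of roughly 650 images per class agrees with the "several hundred images" per typical category mentioned earlier.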

History of the ImageNet challenge

The ILSVRC aims to "follow in the footsteps" of the smaller-scale PASCAL VOC challenge, established in 2005, which contained only about 20,000 images and twenty object classes.[6] To "democratize" ImageNet, Fei-Fei Li proposed a collaboration to the PASCAL VOC team, beginning in 2010, in which research teams would evaluate their algorithms on the given dataset and compete to achieve higher accuracy on several visual recognition tasks.[12]

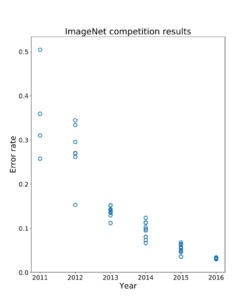

The resulting annual competition is now known as the ImageNet Large Scale Visual Recognition Challenge (ILSVRC). The ILSVRC uses a "trimmed" list of only 1000 image categories or "classes", including 90 of the 120 dog breeds classified by the full ImageNet schema.[6] The 2010s saw dramatic progress in image processing. Around 2011, a good ILSVRC classification top-5 error rate was 25%. In 2012, a deep convolutional neural net called AlexNet achieved 16%; in the next couple of years, top-5 error rates fell to a few percent.[17] While the 2012 breakthrough "combined pieces that were all there before", the dramatic quantitative improvement marked the start of an industry-wide artificial intelligence boom.[4] By 2015, researchers at Microsoft reported that their CNNs exceeded human ability at the narrow ILSVRC tasks.[10][18] However, as one of the challenge's organizers, Olga Russakovsky, pointed out in 2015, the programs only have to identify images as belonging to one of a thousand categories; humans can recognize a larger number of categories, and also (unlike the programs) can judge the context of an image.[19]

By 2014, more than fifty institutions participated in the ILSVRC.[6] In 2017, 29 of 38 competing teams had greater than 95% accuracy.[20] In 2017, ImageNet stated it would roll out a new, much more difficult challenge in 2018 involving the classification of 3D objects using natural language. Because creating 3D data is more costly than annotating a pre-existing 2D image, the dataset is expected to be smaller. The applications of progress in this area would range from robotic navigation to augmented reality.[1]

Bias in ImageNet

A 2019 study of the history of the multiple layers (taxonomy, object classes and labeling) of ImageNet and WordNet described how bias, such as offensive or stereotyped labels applied to images of people, is deeply embedded in most classification approaches for all sorts of images.[21][22][23][24] ImageNet is working to address various sources of bias.[25]

References

- [1] "New computer vision challenge wants to teach robots to see in 3D". New Scientist. 7 April 2017. https://www.newscientist.com/article/2127131-new-computer-vision-challenge-wants-to-teach-robots-to-see-in-3d/.

- [2] Markoff, John (19 November 2012). "For Web Images, Creating New Technology to Seek and Find". The New York Times. https://www.nytimes.com/2012/11/20/science/for-web-images-creating-new-technology-to-seek-and-find.html.

- [3] "ImageNet". 7 September 2020. http://image-net.org/about-stats.php.

- [4] "From not working to neural networking". The Economist. 25 June 2016. https://www.economist.com/news/special-report/21700756-artificial-intelligence-boom-based-old-idea-modern-twist-not.

- [5] "ImageNet Overview". ImageNet. https://image-net.org/about.php.

- [6] Russakovsky, Olga*; Deng, Jia*; Su, Hao; Krause, Jonathan; Satheesh, Sanjeev; Ma, Sean; Huang, Zhiheng; Karpathy, Andrej; Khosla, Aditya; Bernstein, Michael; Berg, Alexander C.; Fei-Fei, Li (2015). "ImageNet Large Scale Visual Recognition Challenge". International Journal of Computer Vision (IJCV). (* = equal contribution)

- [7] Krizhevsky, Alex; Sutskever, Ilya; Hinton, Geoffrey E. (June 2017). "ImageNet classification with deep convolutional neural networks". Communications of the ACM 60 (6): 84–90. doi:10.1145/3065386. ISSN 0001-0782. https://papers.nips.cc/paper/4824-imagenet-classification-with-deep-convolutional-neural-networks.pdf. Retrieved 24 May 2017.

- [8] "Machines 'beat humans' for a growing number of tasks". Financial Times. 30 November 2017. https://www.ft.com/content/4cc048f6-d5f4-11e7-a303-9060cb1e5f44.

- [9] Gershgorn, Dave (18 June 2018). "The inside story of how AI got good enough to dominate Silicon Valley". Quartz. https://qz.com/1307091/the-inside-story-of-how-ai-got-good-enough-to-dominate-silicon-valley/.

- [10] He, Kaiming; Zhang, Xiangyu; Ren, Shaoqing; Sun, Jian (2016). "Deep Residual Learning for Image Recognition". 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR). pp. 770–778. doi:10.1109/CVPR.2016.90. ISBN 978-1-4673-8851-1.

- [11] Hempel, Jesse (13 November 2018). "Fei-Fei Li's Quest to Make AI Better for Humanity". Wired. https://www.wired.com/story/fei-fei-li-artificial-intelligence-humanity/. Retrieved 5 May 2019. "When Li, who had moved back to Princeton to take a job as an assistant professor in 2007, talked up her idea for ImageNet, she had a hard time getting faculty members to help out. Finally, a professor who specialized in computer architecture agreed to join her as a collaborator."

- [12] Gershgorn, Dave (26 July 2017). "The data that transformed AI research—and possibly the world". Atlantic Media Co. https://qz.com/1034972/the-data-that-changed-the-direction-of-ai-research-and-possibly-the-world/. "Having read about WordNet's approach, Li met with professor Christiane Fellbaum, a researcher influential in the continued work on WordNet, during a 2006 visit to Princeton."

- [13] Deng, Jia; Dong, Wei; Socher, Richard; Li, Li-Jia; Li, Kai; Fei-Fei, Li (2009). "ImageNet: A Large-Scale Hierarchical Image Database". 2009 Conference on Computer Vision and Pattern Recognition. http://www.image-net.org/papers/imagenet_cvpr09.pdf. Retrieved 26 July 2017.

- [14] Li, Fei-Fei (23 March 2015). "How we're teaching computers to understand pictures". TED. https://www.ted.com/talks/fei_fei_li_how_we_re_teaching_computers_to_understand_pictures?language=en. Retrieved 16 December 2018.

- [15] "ImageNet". https://www.image-net.org/download.php.

- [16] Ridnik, Tal; Ben-Baruch, Emanuel; Noy, Asaf; Zelnik-Manor, Lihi (5 August 2021). "ImageNet-21K Pretraining for the Masses". arXiv:2104.10972 [cs.CV].

- [17] Robbins, Martin (6 May 2016). "Does an AI need to make love to Rembrandt's girlfriend to make art?". The Guardian. https://www.theguardian.com/science/2016/may/06/does-an-ai-need-to-make-love-to-rembrandts-girlfriend-to-make-art.

- [18] Markoff, John (10 December 2015). "A Learning Advance in Artificial Intelligence Rivals Human Abilities". The New York Times. https://www.nytimes.com/2015/12/11/science/an-advance-in-artificial-intelligence-rivals-human-vision-abilities.html.

- [19] Aron, Jacob (21 September 2015). "Forget the Turing test – there are better ways of judging AI". New Scientist. https://www.newscientist.com/article/dn28206-forget-the-turing-test-there-are-better-ways-of-judging-ai/.

- [20] Gershgorn, Dave (10 September 2017). "The Quartz guide to artificial intelligence: What is it, why is it important, and should we be afraid?". Quartz. https://qz.com/1046350/the-quartz-guide-to-artificial-intelligence-what-is-it-why-is-it-important-and-should-we-be-afraid/.

- [21] "The Viral App That Labels You Isn't Quite What You Think". Wired. ISSN 1059-1028. https://www.wired.com/story/viral-app-labels-you-isnt-what-you-think/. Retrieved 22 September 2019.

- [22] Wong, Julia Carrie (18 September 2019). "The viral selfie app ImageNet Roulette seemed fun – until it called me a racist slur". The Guardian. ISSN 0261-3077. https://www.theguardian.com/technology/2019/sep/17/imagenet-roulette-asian-racist-slur-selfie.

- [23] Crawford, Kate; Paglen, Trevor (19 September 2019). "Excavating AI: The Politics of Training Sets for Machine Learning". https://www.excavating.ai/.

- [24] Lyons, Michael (24 December 2020). "Excavating "Excavating AI": The Elephant in the Gallery". arXiv:2009.01215.

- [25] "Towards Fairer Datasets: Filtering and Balancing the Distribution of the People Subtree in the ImageNet Hierarchy". 17 September 2019. http://image-net.org/update-sep-17-2019.php.
